Overview

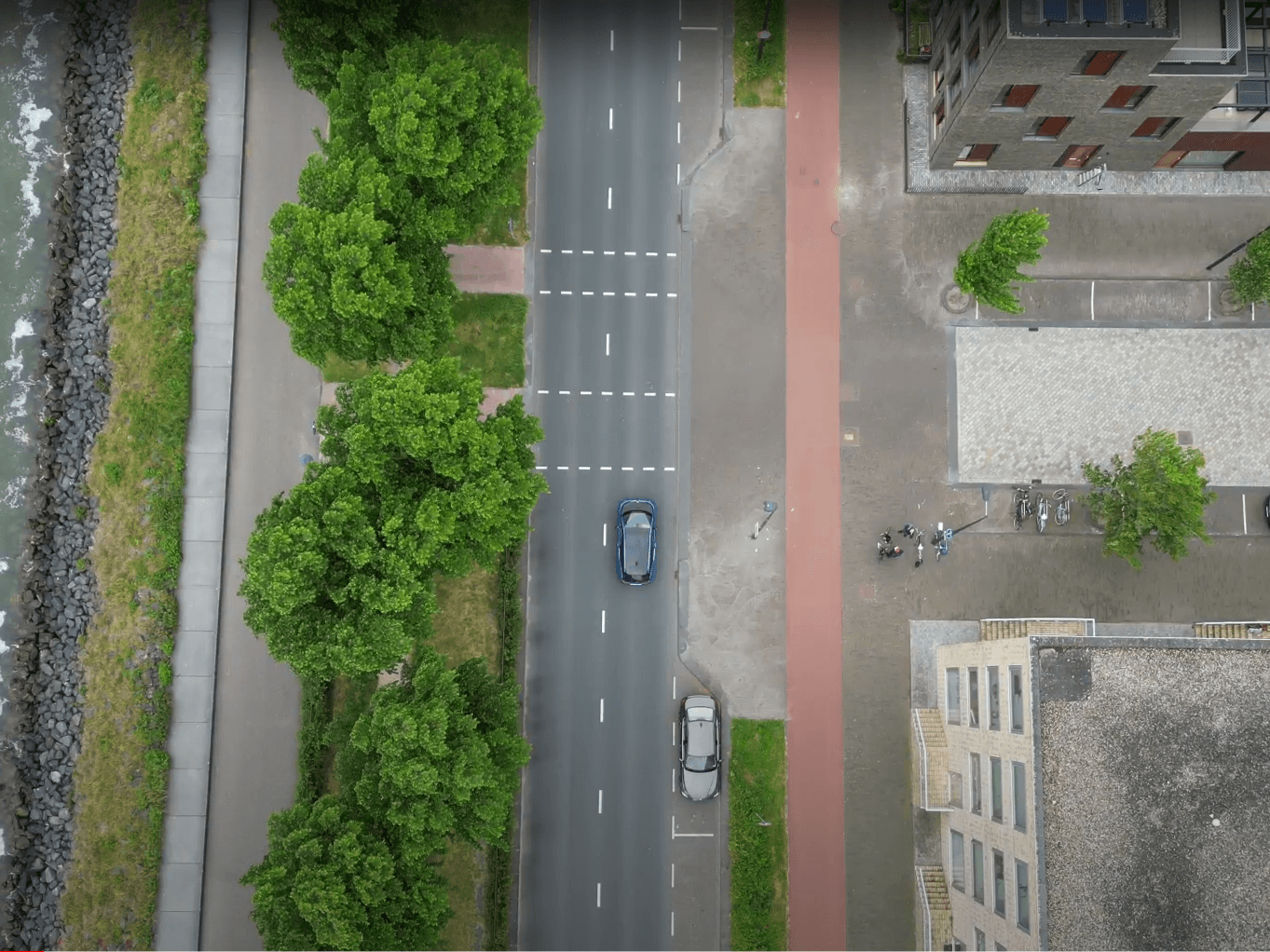

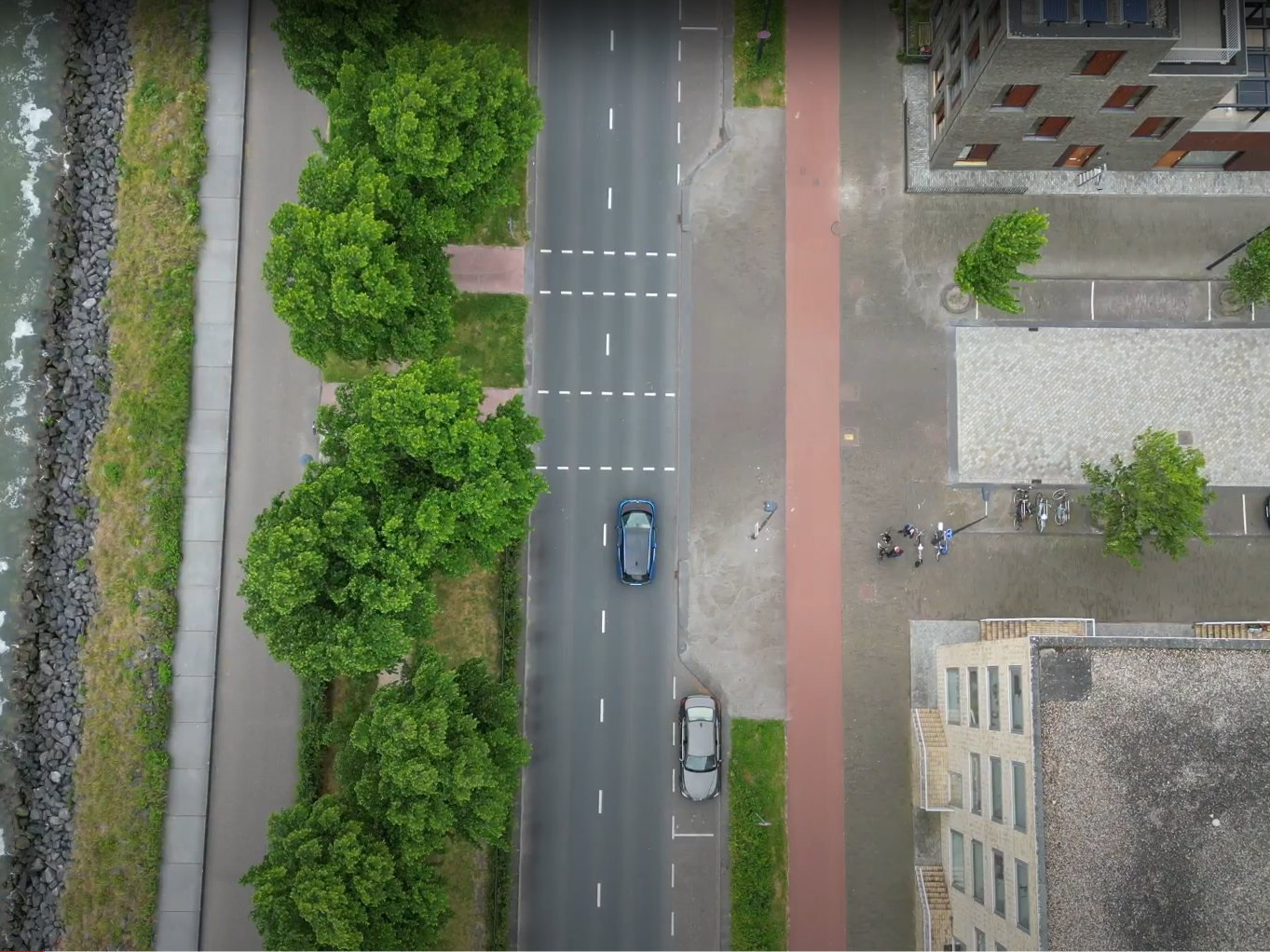

This was my bachelor's thesis at the Universiteit van Amsterdam, carried out for Saivvy, an Amsterdam company developing a phone app that gives cyclists a bird's-eye view (BEV) of their surroundings by transforming ground-view bike-camera footage into a top-down perspective. The bottleneck was data: no real-world BEV dataset matched the cyclist setting. I worked in a group of three with Macha Meijer and Rens den Braber.

The project's job was to build that dataset, train a segmentation + detection + tracking stack on it, and hand the output to Saivvy in the format their mapping model needed.

Data collection

The dataset is the project. Most of the work lives upstream of the model.

- Drone hardware. DJI Mini 3 Pro flying at 60 metres, auto-locked to follow the bike. 60 metres was the resolution sweet spot: any higher and objects became too small to annotate; any lower and the field of view was too narrow to be useful.

- Camera sync. A phone on the bike ran an NTP-time app, synchronised against the same NTP server as the Raspberry Pi controlling the side-view cameras. The time difference between drone and ground cameras worked out to 10–20 ms, less than one frame interval at the 30 fps of the annotation clips (about 33 ms), and we accounted for it during alignment.

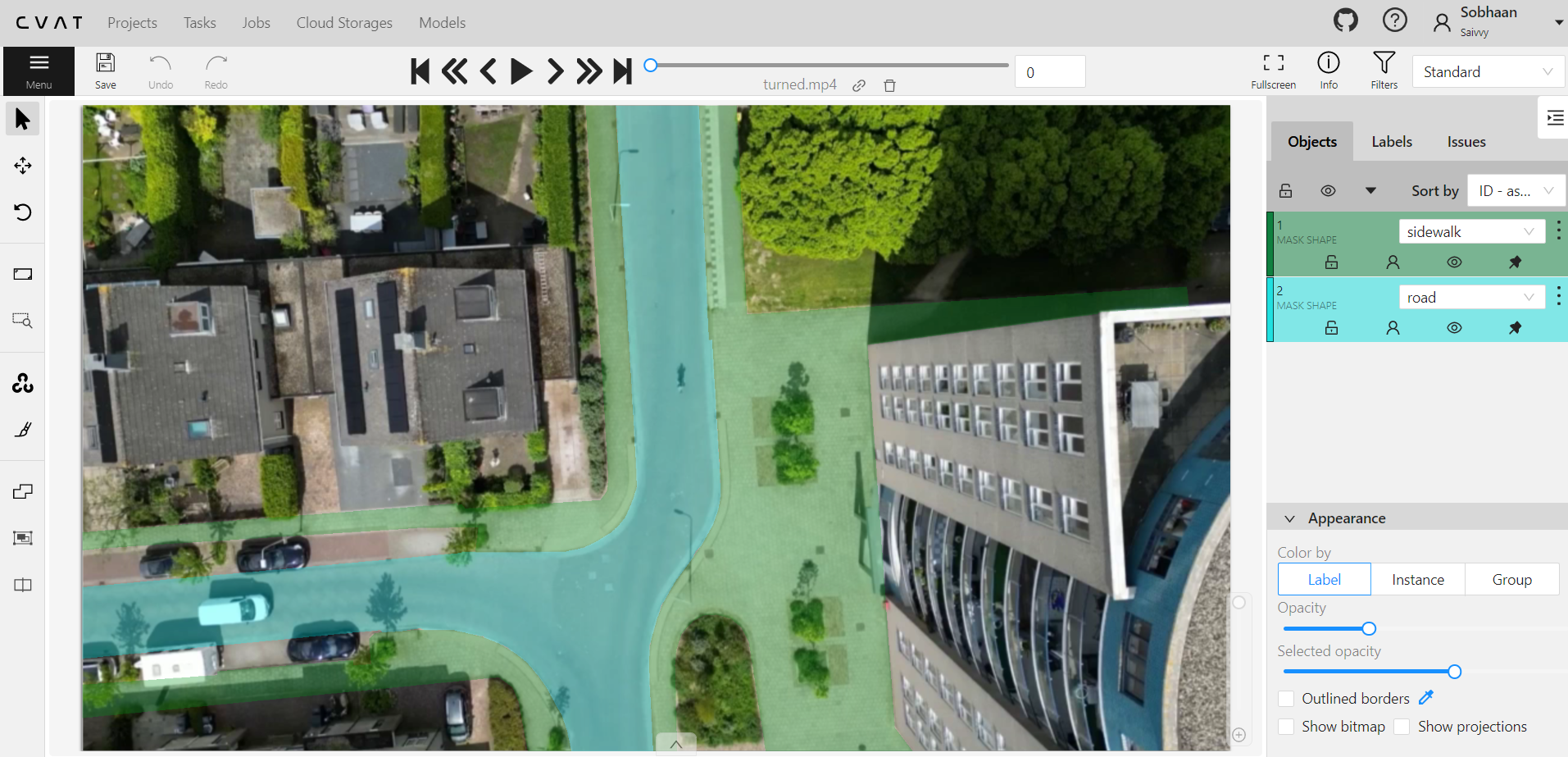

- Annotation infrastructure. Self-hosted CVAT instance on a server we set up. Annotation tasks were 10-second clips at 30 fps, so 300 frames each.

- Splits. 70 / 20 / 10 train / val / test, with geographically separated test locations so the test set is truly held-out, not just temporally split footage from the same intersections.

- Coverage. Residential intersections, traffic-light approaches, occluded roads: the urban scenes a cyclist actually rides through.
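A geographically separated test set can be built by holding out whole locations instead of individual clips. The sketch below is a minimal illustration under assumptions, not the project's actual split script: `split_by_location`, the `(clip_id, location)` input format, and the choice to split the remaining clips randomly between train and val are all hypothetical.

```python
import random
from collections import defaultdict


def split_by_location(clips, test_frac=0.10, val_frac=0.20, seed=0):
    """Split clips so that no test location also appears in train or val.

    clips: list of (clip_id, location) pairs (hypothetical input format).
    Returns a dict mapping split name to a list of clip ids.
    """
    rng = random.Random(seed)
    by_location = defaultdict(list)
    for clip_id, location in clips:
        by_location[location].append(clip_id)

    # Hold out whole locations until test holds roughly test_frac of all clips.
    locations = sorted(by_location)
    rng.shuffle(locations)
    test, test_locations = [], set()
    for loc in locations:
        if len(test) >= test_frac * len(clips):
            break
        test.extend(by_location[loc])
        test_locations.add(loc)

    # Assumption: the remaining clips are shuffled and divided between
    # train and val without any further geographic constraint.
    rest = [cid for loc in locations if loc not in test_locations
            for cid in by_location[loc]]
    rng.shuffle(rest)
    n_val = round(val_frac * len(clips))
    return {"train": rest[n_val:], "val": rest[:n_val], "test": test}
```

Because whole locations are held out, the realised test fraction only approximates the 10% target when locations contribute different numbers of clips.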

Models

Segmentation (7 classes)

Three architectures, all via OpenMMLab:

| Model | Recall (%) | Precision (%) | F1 (%) | mIoU (%) |
| :-- | :-- | :-- | :-- | :-- |
| Segformer (Mit-B1) | 59.6 | 41.8 | 43.4 | 30.5 |
| FCN (ResNet) | 55.8 | 38.0 | 35.8 | 24.5 |
| PointRend (ResNet) | 53.1 | 37.4 | 35.3 | 23.5 |

Segformer was the strongest overall and is what shipped to Saivvy. The interesting per-class result: FCN hit 94.4% recall on pedestrian crossings specifically, trading general performance for that one class. Continuous and non-continuous lane lines were the hardest classes for every model (precision in the single digits for some), since they're thin and easily lost at this resolution.
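For readers unfamiliar with these metrics, the sketch below shows how per-class recall, precision, F1, and IoU are conventionally derived from a pixel-level confusion matrix and then macro-averaged. It is an illustration only: the function names are hypothetical, and the evaluation code actually used in the project may handle averaging or empty classes differently.

```python
def per_class_metrics(conf):
    """conf[i][j] = pixels whose ground-truth class is i and prediction is j."""
    n = len(conf)
    rows = []
    for c in range(n):
        tp = conf[c][c]
        fn = sum(conf[c]) - tp                        # class-c pixels that were missed
        fp = sum(conf[r][c] for r in range(n)) - tp   # pixels wrongly labelled c
        # Assumption: a metric with an empty denominator is reported as 0.
        recall = tp / (tp + fn) if tp + fn else 0.0
        precision = tp / (tp + fp) if tp + fp else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        iou = tp / (tp + fp + fn) if tp + fp + fn else 0.0
        rows.append({"recall": recall, "precision": precision,
                     "f1": f1, "iou": iou})
    return rows


def macro_average(rows):
    """Unweighted mean over classes; the mean of the 'iou' column is mIoU."""
    return {key: sum(r[key] for r in rows) / len(rows) for key in rows[0]}
```

Macro-averaging gives thin classes such as lane lines the same weight as large ones, so a few weak classes can pull the averaged scores down noticeably.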

Detection (12 classes)

Three architectures tested: ROI Transformer, ReDet, Swin Transformer. All three were trained on a 24 GB RTX 3090 with SGD (momentum 0.9, weight decay 1e-4, 500-iter linear warm-up). The ROI-Swin combination was the best across recall, precision, F1, and mAP, so it was chosen for the final pipeline.

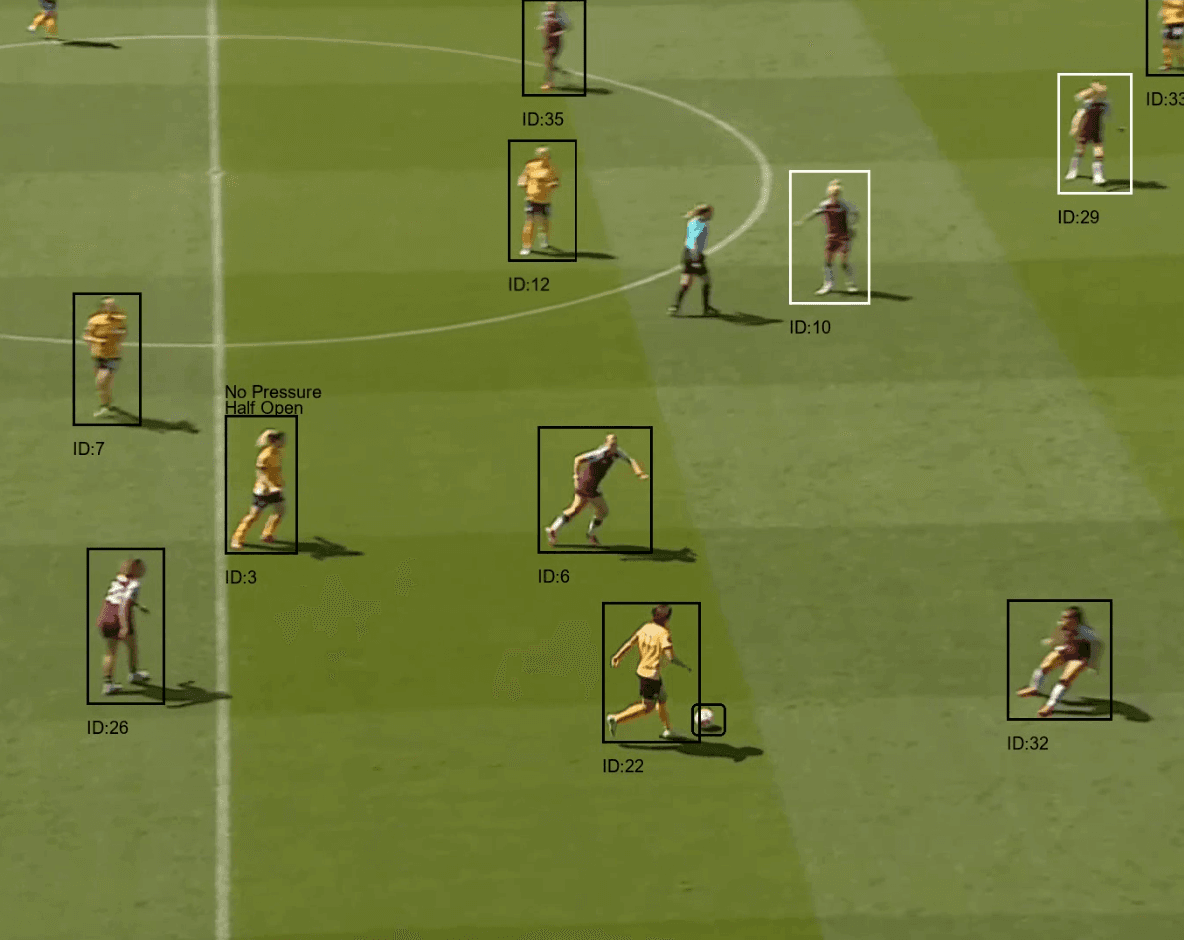
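The 500-iteration linear warm-up mentioned above can be pictured as a multiplier on the learning rate that ramps up from a small starting value. This is a minimal sketch, not the project's training config: `warmup_lr` is a hypothetical helper, and `base_lr=0.01` and `start_factor=0.001` are assumed placeholder values that the write-up does not state.

```python
def warmup_lr(iteration, base_lr=0.01, warmup_iters=500, start_factor=0.001):
    """Learning rate at a given iteration under linear warm-up.

    base_lr and start_factor are assumed values for illustration only.
    """
    if iteration >= warmup_iters:
        return base_lr
    # Interpolate the multiplier linearly from start_factor up to 1.0.
    progress = iteration / warmup_iters
    factor = start_factor + (1.0 - start_factor) * progress
    return base_lr * factor
```

After the warm-up window the function simply returns the base rate; any later decay schedule is out of scope for this sketch.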

Tracking

A custom rule-based tracker links detection bounding boxes across frames. We tuned it by sweeping the IoU threshold and counting how many predicted tracks were fully correct against the ground-truth tracks. The honest limitation: the tracker isn't itself a learned model, so it can't be improved by adding data.
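To make the role of the IoU threshold concrete, here is a minimal sketch of one common rule-based scheme: greedy frame-to-frame matching on box overlap. It is not the project's tracker, whose rules are not described here. The function names and the default threshold are hypothetical, and boxes are assumed to be axis-aligned `(x1, y1, x2, y2)` tuples; oriented boxes would need a polygon IoU instead.

```python
from itertools import count


def iou(a, b):
    """IoU of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def track(frames, iou_threshold=0.3):
    """frames: list of per-frame box lists. Returns per-frame lists of track ids."""
    next_id = count()
    previous = []  # (track_id, box) pairs from the previous frame
    all_ids = []
    for boxes in frames:
        # Score every (previous track, new box) pair, best overlap first.
        pairs = sorted(
            ((iou(pbox, box), pi, bi)
             for pi, (_, pbox) in enumerate(previous)
             for bi, box in enumerate(boxes)),
            reverse=True,
        )
        ids = [None] * len(boxes)
        used_prev = set()
        for score, pi, bi in pairs:
            if score < iou_threshold:
                break  # all remaining pairs overlap too little
            if pi in used_prev or ids[bi] is not None:
                continue  # track or box already matched
            ids[bi] = previous[pi][0]
            used_prev.add(pi)
        # Boxes left unmatched start new tracks.
        ids = [next(next_id) if i is None else i for i in ids]
        previous = list(zip(ids, boxes))
        all_ids.append(ids)
    return all_ids
```

A threshold sweep would call `track` once per candidate `iou_threshold` and keep the value that yields the most fully correct tracks. Note that this sketch only looks one frame back, so a missed detection ends the track.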

What was hard

- Annotation throughput. Each 10-second clip is 300 frames. Most of the project's calendar time went to annotation, not modelling.

- Data scarcity. The whole point of the project was that there was no existing dataset. Models were trained on what we annotated, and the ceiling on results was set by that.

- Sim2real gap. Most BEV literature uses simulated data. Real drone footage avoids the gap but introduces motion blur, weather variance, and GPS drift that simulated datasets don't have.

What shipped

A drone-captured BEV dataset, a Segformer segmentation pipeline, an ROI-Swin detection pipeline, a tracker that produces stable IDs across frames, and a post-processing script that exports everything in Saivvy's mapping-model format.

Stack

Python, OpenMMLab (Segformer, FCN, PointRend, ROI Transformer, ReDet, Swin), CVAT (self-hosted), DJI Mini 3 Pro, Raspberry Pi (camera sync), NTP.