Overview

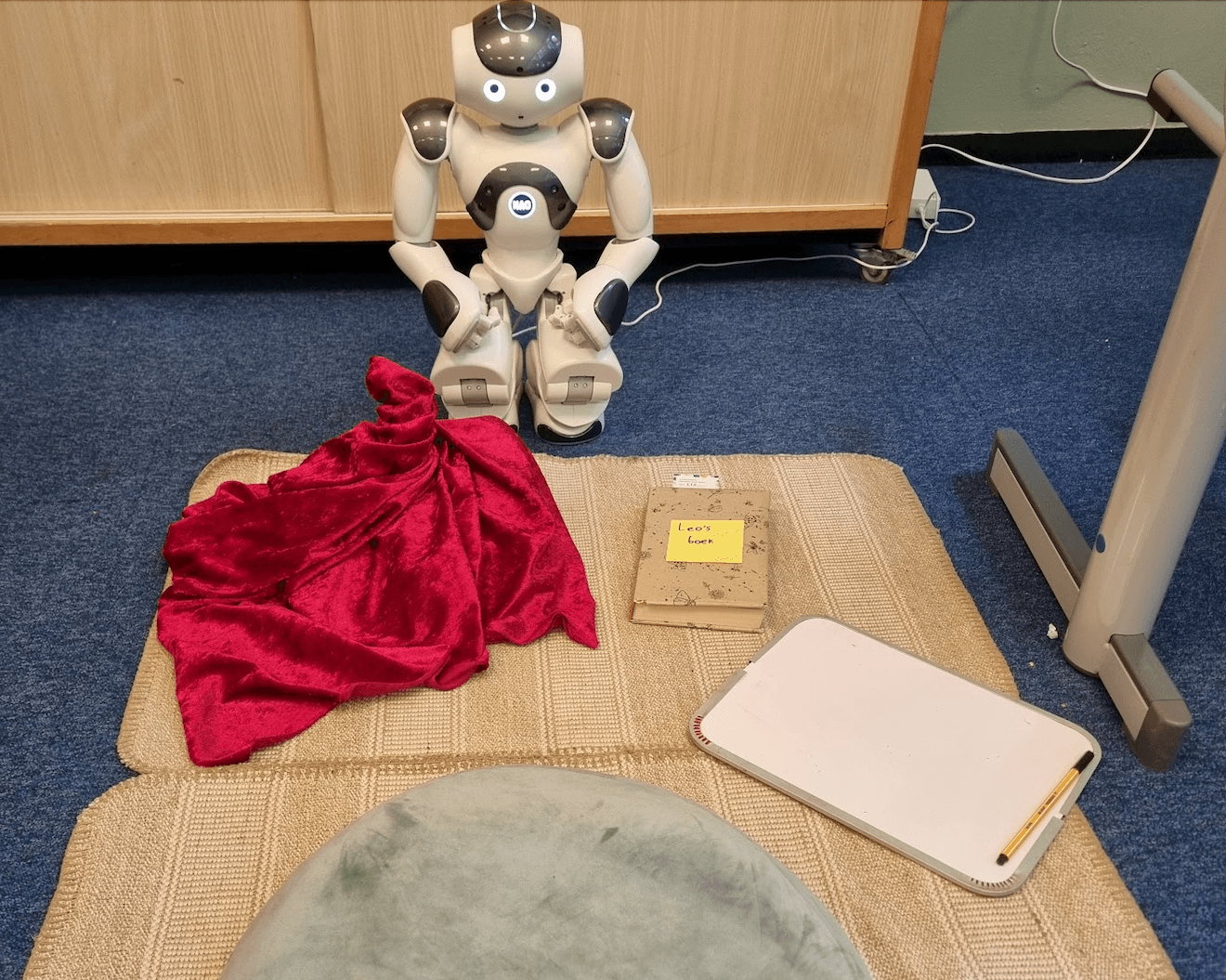

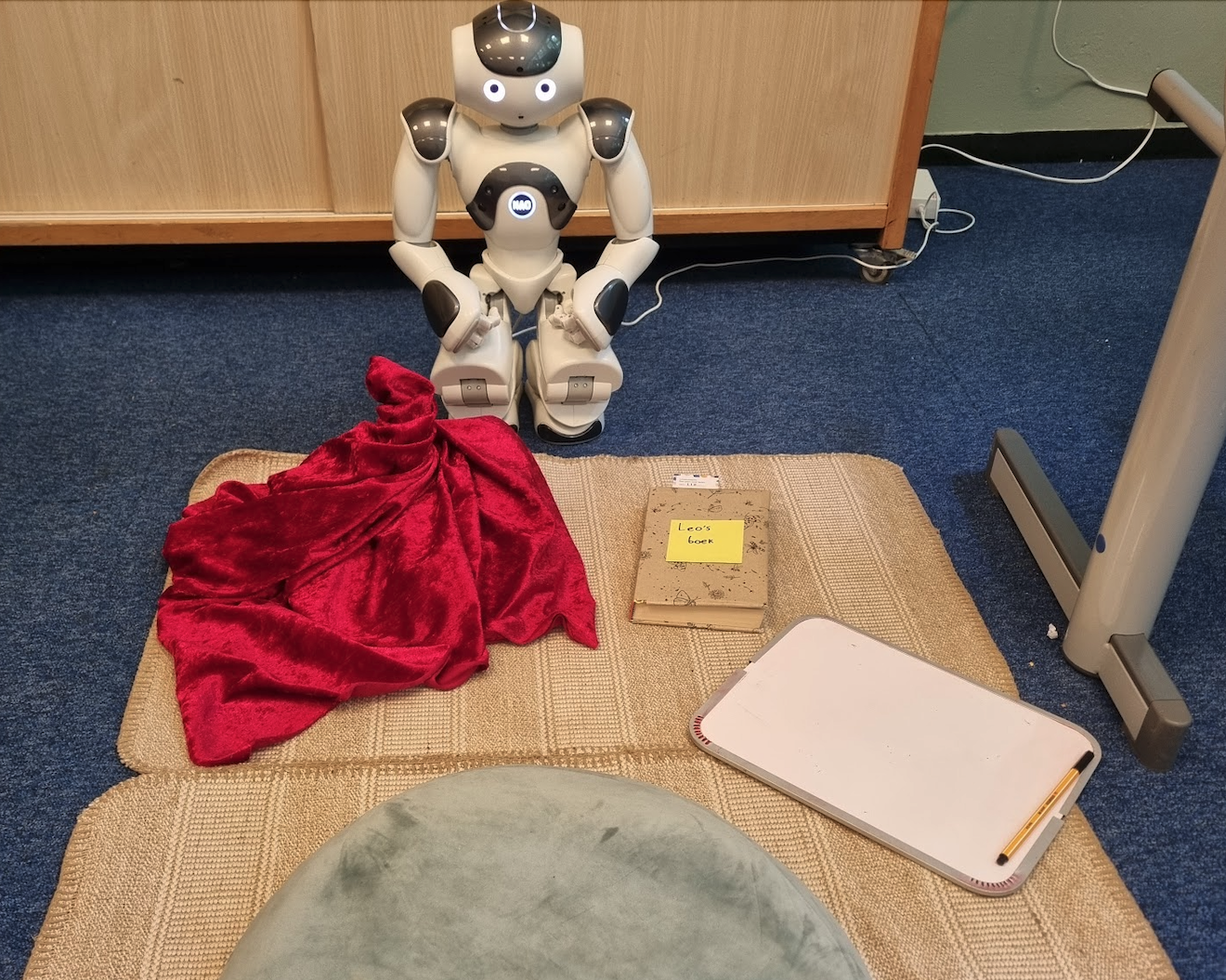

Research-assistant work at Vrije Universiteit Amsterdam from October 2023 to July 2024, on a multi-session intervention with Leo, a NAO social robot, in two primary schools. The premise: rather than treating reading motivation as a content problem ("which book?"), treat it as a relationship problem. Leo helps the child build a personal connection to a book through structured book discussions across four sessions. The intervention vs. control comparison was run with around 100 children aged 8 to 11.

Published: "The Robot Bookworm: Fostering Children's Reading Motivation through Personalized Book Discussions" at ACM/IEEE HRI '26 (Edinburgh, March 2026).

What the system actually does

Leo runs a four-session interaction structured around the IRF pattern (Initiation → Response → Feedback), adapted from dialogic reading research. Each child gets a personally fitting book, chosen through an upstream co-design process. Across the four sessions, Leo opens with an observation, an opinion, or a joke about the book and then asks the child a related, autonomy-supporting question.

The interaction is built on a slot-based dialogue script with branches and persistent memory, so Leo can refer back to information the child shared in earlier sessions. That's what makes it feel like the robot has actually been reading along.
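A minimal sketch of what a slot with branches and persistent memory could look like, assuming a simple keyword-based branch and a per-child dictionary as the memory. The names `Slot`, `ChildMemory`, and `run_slot`, and the example lines, are hypothetical and not taken from the project's code.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ChildMemory:
    """Facts the child shared, kept across sessions (hypothetical structure)."""
    facts: dict[str, str] = field(default_factory=dict)


@dataclass
class Slot:
    """One IRF turn: Leo initiates, the child responds, Leo gives feedback."""
    initiation: str                      # template; may reference memory keys
    remember_as: str | None = None       # memory key under which to store the reply
    feedback: dict[str, str] = field(default_factory=dict)  # keyword -> branch line
    default_feedback: str = "Thanks for telling me!"


class _Fallback(dict):
    """Mapping that substitutes a neutral word for facts not yet in memory."""
    def __missing__(self, key: str) -> str:
        return "something"


def run_slot(slot: Slot, memory: ChildMemory, reply: str) -> tuple[str, str]:
    """Return (Leo's opening line, Leo's feedback line) for one slot."""
    opening = slot.initiation.format_map(_Fallback(memory.facts))
    if slot.remember_as:
        memory.facts[slot.remember_as] = reply  # persists into later sessions
    lowered = reply.lower()
    for keyword, line in slot.feedback.items():
        if keyword in lowered:                  # first matching branch wins
            return opening, line
    return opening, slot.default_feedback


# Session 1 stores a fact; session 2 refers back to it.
memory = ChildMemory()
session_1 = Slot(
    "Who is your favourite character so far?",
    remember_as="favourite",
    feedback={"fox": "Foxes are clever, I like that one too!"},
)
session_2 = Slot("Last time you told me you liked {favourite}. What happened next?")
print(run_slot(session_1, memory, "the fox"))
print(run_slot(session_2, memory, "It ran away"))
```

Keeping the memory as a separate object from the script is what lets the same slot definitions be reused for every child while the references stay personal.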

The AI pipeline (the part of this project that lives or dies on engineering)

The intervention condition uses a hybrid agentic AI pipeline:

- GPT-4o generates dialogic content offline for each child, using prompts that combine the user model, book metadata, and session context.

- For each IRF slot, the model produces several candidate utterances.

- An automatic validator scores them for safety, age-appropriateness, and task fit.

- A human moderator selects or lightly edits the final version.

- Validated content gets stored per child and scheduled into the slot-based script.
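The steps above can be sketched as a small generate, validate, moderate loop. This is an illustration under stated assumptions, not the project's implementation: the generator and scorers are passed in as plain callables (no real GPT-4o call is made), scores are assumed to lie in [0, 1], and the 0.8 threshold and every function name are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional

CRITERIA = ("safety", "age_appropriateness", "task_fit")


@dataclass
class Candidate:
    text: str
    scores: Dict[str, float]  # criterion name -> score in [0, 1]


def validate(text: str, scorers: Dict[str, Callable[[str], float]]) -> Candidate:
    """Score one candidate utterance on every criterion."""
    return Candidate(text, {name: scorers[name](text) for name in CRITERIA})


def shortlist(candidates: List[Candidate], threshold: float = 0.8) -> List[Candidate]:
    """Keep candidates that clear the threshold on all criteria, best first."""
    passed = [c for c in candidates if min(c.scores.values()) >= threshold]
    return sorted(passed, key=lambda c: min(c.scores.values()), reverse=True)


def fill_slot(
    generate: Callable[[int], List[str]],
    scorers: Dict[str, Callable[[str], float]],
    moderate: Callable[[List[Candidate]], str],
    n_candidates: int = 3,
) -> Optional[str]:
    """Run one IRF slot through the pipeline; None means nothing was approved."""
    candidates = [validate(text, scorers) for text in generate(n_candidates)]
    passed = shortlist(candidates)
    if not passed:
        return None          # nothing reaches the moderator, slot stays unfilled
    return moderate(passed)  # human selects or lightly edits the final text


# Toy stand-ins for the offline generator, the validator, and the moderator.
def toy_generate(n: int) -> List[str]:
    drafts = ["What did you think of the ending?", "This stupid book is boring."]
    return drafts[:n]


toy_scorers = {
    "safety": lambda t: 0.0 if "stupid" in t.lower() else 1.0,
    "age_appropriateness": lambda t: 1.0 if len(t.split()) <= 20 else 0.5,
    "task_fit": lambda t: 1.0 if t.endswith("?") else 0.5,
}

print(fill_slot(toy_generate, toy_scorers, lambda passed: passed[0].text))
```

Requiring every criterion to clear the threshold, rather than averaging the scores, matches the conservative stance described here: a high task-fit score cannot compensate for a safety failure.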

The control condition uses pre-written content only. Same script structure, same robot, same child; only the dialogic content differs, so any difference between conditions can be attributed to the content rather than to the robot or the script.

The reason for the validator + human moderator stack is the obvious one: child-facing generative dialogue has real risks (inappropriate content, factual hallucinations, off-topic drift). The pipeline makes the trade-off conservatively. Generation is offline, validated, and human-checked before the child ever sees it.

Results

- Children in the intervention reported Leo engaged them in book-related dialogue more than the control: OR = 2.50 [1.20, 5.20], p = .01.

- They felt Leo had more credibly read the book: OR = 3.97 [1.87, 8.50], p < .001.

- Reader–book relatedness was higher in the intervention condition: d = 0.40, with 63% of children reporting high relatedness vs. 49% in the control.

- Book-dialogue quality correlated with both relatedness and overall enjoyment (Spearman ρ ≈ 0.55 and 0.52, both p < .001).

Children rated Leo's observations and reflective questions highly (mean > 4); humour landed slightly lower (mean > 3.5) but was still appreciated.
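As a reading aid for the odds ratios above, the sketch below recovers the standard error, z statistic, and two-sided p-value implied by each reported interval. It assumes the brackets are 95% Wald intervals that are symmetric on the log-odds scale; the write-up does not state how the intervals were computed, so this is a consistency check rather than a reproduction of the analysis. The function name `wald_summary` is hypothetical.

```python
import math


def wald_summary(odds_ratio, ci_low, ci_high, z_crit=1.959964):
    """Back out SE(log OR), z, and two-sided p from an OR and its 95% CI."""
    log_or = math.log(odds_ratio)
    # A Wald interval spans log_or +/- z_crit * se on the log scale.
    se = (math.log(ci_high) - math.log(ci_low)) / (2 * z_crit)
    z = log_or / se
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail probability
    return se, z, p


reported = {
    "engaged in book dialogue": (2.50, 1.20, 5.20),
    "credibly read the book": (3.97, 1.87, 8.50),
}
for label, (odds_ratio, low, high) in reported.items():
    se, z, p = wald_summary(odds_ratio, low, high)
    print(f"{label}: SE(log OR) = {se:.2f}, z = {z:.2f}, p = {p:.4f}")
```

Under that assumption the implied p-values agree with the reported ones: roughly .014 for the engagement item and below .001 for the credibility item.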

My role

Worked across the full project lifecycle:

- Experiment design. Helping shape the mixed factorial setup, the intervention vs. control split, and the four-session structure.

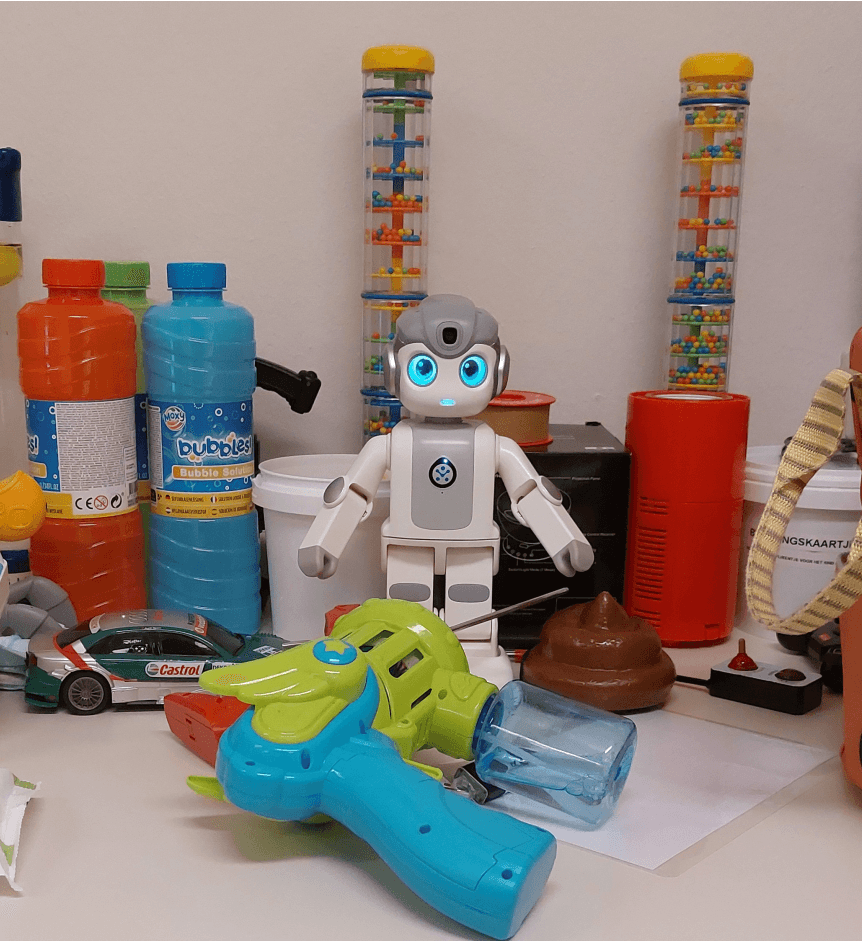

- Focus groups. Running upstream sessions with primary-school educators (six teachers, one principal, one librarian) and the co-design workshops with children that informed the intervention concept.

- Script implementation. Building out the slot-based dialogue scripts and the branch / persistent-memory interaction logic that runs Leo across the four sessions.

- Recruitment & consent. School liaison: coordinating with both primary schools, scheduling, and running the parent / child consent workflow.

- Data collection runs. Audio and video setup for each session, behavioral logging during interactions, and the post-session questionnaire and interview workflow.

- Qualitative analysis. Whisper-based interview transcription, thematic coding of children's open-ended responses, and codebook calibration with co-coders to keep coding consistent across the team.

- Statistical analysis. Producing the inferential numbers reported in the paper: the odds ratios for engagement and "Leo read the book" credibility, the d = 0.40 effect on reader–book relatedness, and the Spearman ρ correlations between dialogue quality and both relatedness and enjoyment.
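For the Spearman ρ mentioned in the last item, here is a dependency-free sketch of the statistic: rank both variables (ties share the average rank, which matters for Likert-style ratings) and take the Pearson correlation of the ranks. The ratings in the usage example are made up for illustration and are not study data. In practice a library routine such as `scipy.stats.spearmanr` would also return the p-value.

```python
import math


def average_ranks(values):
    """1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman rank correlation: Pearson correlation computed on the ranks."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    rx, ry = average_ranks(x), average_ranks(y)
    mean_x, mean_y = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("rho is undefined when one variable is constant")
    return cov / math.sqrt(var_x * var_y)


# Made-up 5-point ratings for illustration only.
dialogue_quality = [5, 4, 4, 3, 2, 5, 1]
enjoyment = [4, 4, 5, 3, 2, 5, 2]
print(round(spearman_rho(dialogue_quality, enjoyment), 2))
```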

Stack

NAO robot (NAOqi SDK), GPT-4o, Python, content validation pipeline, slot-based dialogue scripting, mixed factorial study design, thematic analysis (Whisper for interview transcription).